Deep Neural Network Programming Assignment 2

Import packages

```python
import time
```

Load the data

```python
train_x_orig, train_y, test_x_orig, test_y, classes = load_data()
```

Reshape the data

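```python
# (m, num_px, num_px, 3) -> (num_px*num_px*3, m); -1 flattens everything after the first axis
train_x_flatten = train_x_orig.reshape(train_x_orig.shape[0], -1).T
```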
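The line above only flattens the images. The later code trains on `train_x` rather than `train_x_flatten`, so the flattened pixels are presumably rescaled before training. Dividing by 255 to map them into [0, 1] is an assumption here. The sketch below applies both steps to synthetic data. `flatten_and_normalize` and the random stand-in images are hypothetical and do not come from the original post.

```python
import numpy as np

def flatten_and_normalize(images):
    """Turn an (m, num_px, num_px, 3) uint8 batch into an (num_px*num_px*3, m)
    float matrix with values in [0, 1] (assumed preprocessing)."""
    m = images.shape[0]
    # reshape(m, -1) keeps one row per example; .T makes each column one example
    flat = images.reshape(m, -1).T
    # Pixel values are 0..255, so dividing by 255 scales them into [0, 1]
    return flat / 255.0

# Synthetic stand-in for train_x_orig: three random 64x64 RGB "images"
rng = np.random.default_rng(0)
fake_images = rng.integers(0, 256, size=(3, 64, 64, 3)).astype(np.uint8)
X = flatten_and_normalize(fake_images)
print(X.shape)  # (12288, 3)
```

Each column of `X` holds one image's pixels in (row, column, channel) order. This layout matches the `(n_x, m)` input shape that the models below expect.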


Initialize the number of units in each layer

```python
n_x = num_px*num_px*3  # input features per flattened RGB image
```
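The L-layer model below starts from 12288 inputs, so `num_px` must be 64. The layer sizes then fix the shapes of the two-layer model's parameters. This assumes the usual convention that each column of X is one example, so X has shape $(n_x, m)$:

$$
n_x = \text{num\_px} \times \text{num\_px} \times 3 = 64 \times 64 \times 3 = 12288
$$

$$
W^{[1]} \in \mathbb{R}^{n_h \times n_x}, \quad b^{[1]} \in \mathbb{R}^{n_h \times 1}, \quad W^{[2]} \in \mathbb{R}^{n_y \times n_h}, \quad b^{[2]} \in \mathbb{R}^{n_y \times 1}
$$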

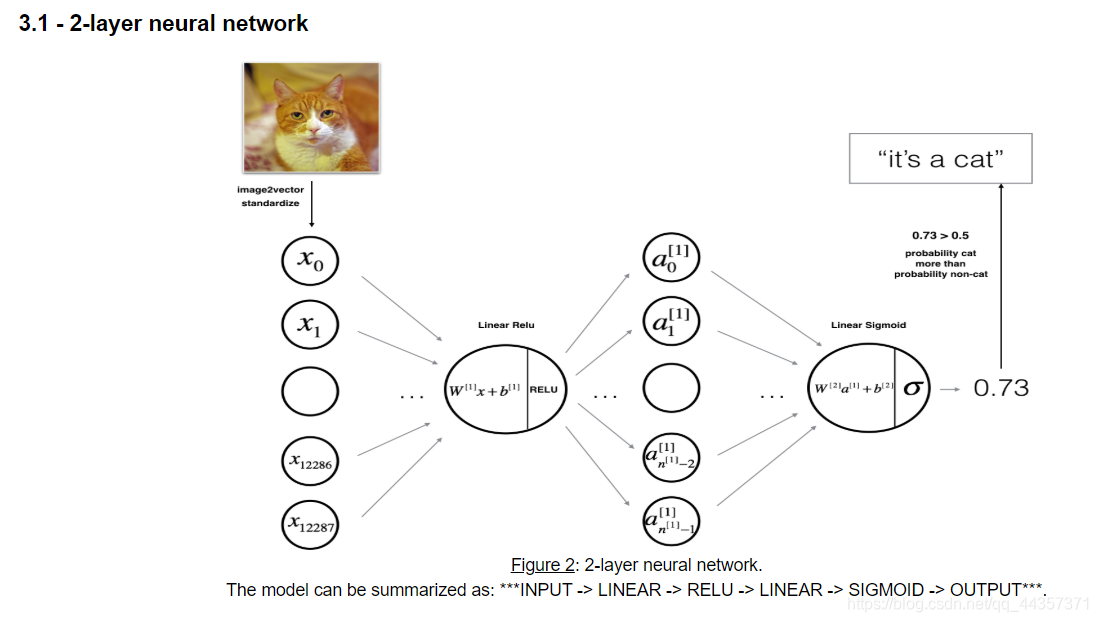

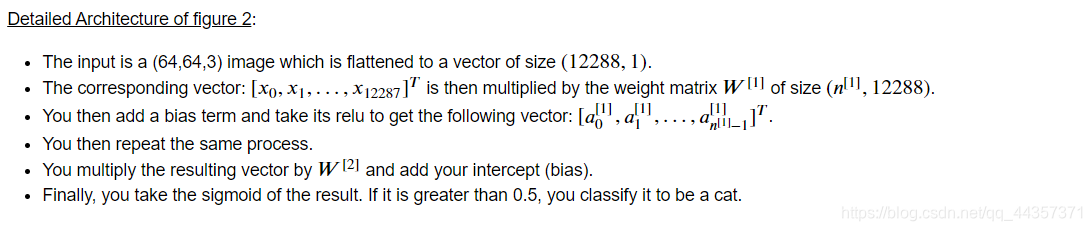

Two-layer neural network model

```python
def two_layer_model(X, Y, layer_dims, learning_rate = 0.0075, num_iterations=3000, print_cost=False):
    ...
```
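Only the signature of `two_layer_model` survives in this post. Below is a minimal NumPy sketch of what such a function typically does. It assumes a LINEAR -> RELU -> LINEAR -> SIGMOID architecture, small random initialization, and plain batch gradient descent on the cross-entropy cost. `two_layer_model_sketch` is a hypothetical name, and it is not the author's implementation. It returns the recorded costs instead of printing them.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def two_layer_model_sketch(X, Y, layer_dims, learning_rate=0.0075,
                           num_iterations=3000, seed=1):
    """LINEAR -> RELU -> LINEAR -> SIGMOID trained with batch gradient descent.

    X: (n_x, m) inputs, Y: (1, m) labels in {0, 1}.
    Returns (parameters, costs), with one cost recorded every 100 iterations.
    """
    n_x, n_h, n_y = layer_dims
    m = X.shape[1]
    rng = np.random.default_rng(seed)
    # Small random weights break symmetry; biases start at zero
    W1 = rng.standard_normal((n_h, n_x)) * 0.01
    b1 = np.zeros((n_h, 1))
    W2 = rng.standard_normal((n_y, n_h)) * 0.01
    b2 = np.zeros((n_y, 1))
    costs = []
    for i in range(num_iterations):
        # Forward pass
        Z1 = W1 @ X + b1
        A1 = np.maximum(0, Z1)                 # ReLU
        Z2 = W2 @ A1 + b2
        A2 = sigmoid(Z2)                       # predicted P(y = 1)
        # Cross-entropy cost; clipping avoids log(0)
        A2c = np.clip(A2, 1e-12, 1 - 1e-12)
        cost = -np.mean(Y * np.log(A2c) + (1 - Y) * np.log(1 - A2c))
        # Backward pass; for sigmoid + cross-entropy, dZ2 simplifies to A2 - Y
        dZ2 = A2 - Y
        dW2 = dZ2 @ A1.T / m
        db2 = np.sum(dZ2, axis=1, keepdims=True) / m
        dZ1 = (W2.T @ dZ2) * (Z1 > 0)          # ReLU passes gradient only where Z1 > 0
        dW1 = dZ1 @ X.T / m
        db1 = np.sum(dZ1, axis=1, keepdims=True) / m
        # Gradient descent step
        W1 -= learning_rate * dW1
        b1 -= learning_rate * db1
        W2 -= learning_rate * dW2
        b2 -= learning_rate * db2
        if i % 100 == 0:
            costs.append(float(cost))
    return {"W1": W1, "b1": b1, "W2": W2, "b2": b2}, costs

# Toy usage: a linearly separable 2-D problem (not the image dataset)
rng = np.random.default_rng(0)
X_toy = rng.standard_normal((2, 200))
Y_toy = (X_toy[0] + X_toy[1] > 0).astype(float).reshape(1, -1)
params, costs = two_layer_model_sketch(X_toy, Y_toy, (2, 4, 1),
                                       learning_rate=0.5, num_iterations=2000)
print(costs[0], costs[-1])
```

With the call used in this post, `X` would be `train_x` and `layer_dims` would be `(n_x, n_h, n_y)`. The toy learning rate is larger only because the toy problem is tiny.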

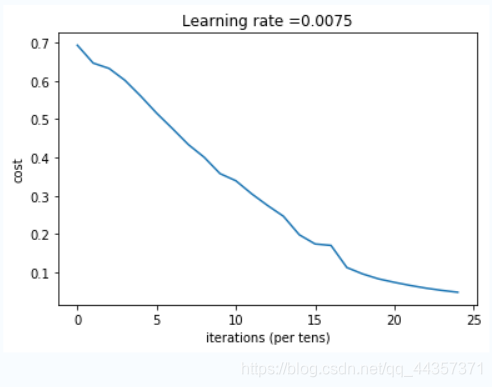

```python
parameters = two_layer_model(train_x, train_y, layer_dims = (n_x, n_h, n_y), num_iterations = 2500, print_cost=True)
```

```
Cost after iteration 0: 0.6930497356599888
Cost after iteration 100: 0.6464320953428849
Cost after iteration 200: 0.6325140647912677
Cost after iteration 300: 0.6015024920354665
Cost after iteration 400: 0.5601966311605747
Cost after iteration 500: 0.5158304772764729
Cost after iteration 600: 0.47549013139433255
Cost after iteration 700: 0.43391631512257495
Cost after iteration 800: 0.4007977536203886
Cost after iteration 900: 0.3580705011323798
Cost after iteration 1000: 0.3394281538366412
Cost after iteration 1100: 0.3052753636196264
Cost after iteration 1200: 0.2749137728213015
Cost after iteration 1300: 0.24681768210614846
Cost after iteration 1400: 0.19850735037466108
Cost after iteration 1500: 0.17448318112556654
Cost after iteration 1600: 0.17080762978096023
Cost after iteration 1700: 0.11306524562164728
Cost after iteration 1800: 0.09629426845937154
Cost after iteration 1900: 0.08342617959726861
Cost after iteration 2000: 0.07439078704319084
Cost after iteration 2100: 0.06630748132267932
Cost after iteration 2200: 0.05919329501038171
Cost after iteration 2300: 0.053361403485605564
Cost after iteration 2400: 0.04855478562877018
```
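The printed value is the cost being minimized. Assume it is the binary cross-entropy $J = -\frac{1}{m}\sum_{i=1}^{m}\big(y^{(i)}\log a^{(i)} + (1-y^{(i)})\log(1-a^{(i)})\big)$. Then the starting value of about 0.693 is what one would expect. With small initial weights the sigmoid outputs are close to 0.5, and a prediction of 0.5 costs $\ln 2 \approx 0.6931$ per example. `compute_cost` below is a hypothetical helper that illustrates this.

```python
import numpy as np

def compute_cost(AL, Y):
    """Binary cross-entropy averaged over the m examples.
    AL: (1, m) predicted probabilities, Y: (1, m) labels in {0, 1}."""
    m = Y.shape[1]
    cost = -np.sum(Y * np.log(AL) + (1 - Y) * np.log(1 - AL)) / m
    return float(cost)

Y = np.array([[1, 0, 1, 1]])
# Predicting 0.5 everywhere gives ln 2, whatever the labels are
print(compute_cost(np.full((1, 4), 0.5), Y))
# Confident, mostly correct predictions give a much lower cost
print(compute_cost(np.array([[0.9, 0.1, 0.8, 0.7]]), Y))
```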

```python
predictions_train = predict(train_x, train_y, parameters)
```

```
Accuracy: 0.9999999999999998
```
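The body of `predict` is not shown here. For a sigmoid output, prediction usually means thresholding the probability at 0.5 and comparing the result with the labels. The sketch below assumes that. It takes the forward-pass probabilities as input instead of running the model itself, and `predict_from_probs` is a hypothetical name. An accuracy is a fraction of correctly classified examples, so 0.9999999999999998 differs from 1 only by floating-point rounding. In other words, the two-layer model classifies every training image correctly.

```python
import numpy as np

def predict_from_probs(probs, y):
    """Turn (1, m) sigmoid outputs into 0/1 predictions and print the accuracy."""
    p = (probs > 0.5).astype(int)      # predict class 1 when P(y = 1) > 0.5
    accuracy = np.mean(p == y)         # fraction of examples predicted correctly
    print("Accuracy: " + str(accuracy))
    return p

probs = np.array([[0.9, 0.2, 0.6, 0.4]])
y = np.array([[1, 0, 0, 0]])
p = predict_from_probs(probs, y)       # prints "Accuracy: 0.75"
```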

On the test set:

```
Accuracy: 0.72
```

L-layer neural network

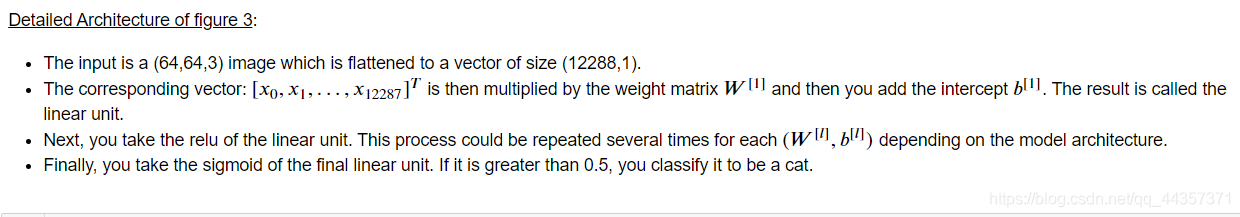

```python
layers_dims = [12288, 20, 7, 5, 1]
```
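`layers_dims` lists the size of every layer, starting with the input. Here that means 12288 inputs, hidden layers of 20, 7 and 5 units, and one output unit, so there are four weight matrices. The sketch below shows how those sizes map to parameter shapes. Scaling the random weights by $1/\sqrt{n^{[l-1]}}$ is one common choice for deeper ReLU networks and is an assumption here. The helper name `initialize_parameters_deep` is also used for illustration only.

```python
import numpy as np

def initialize_parameters_deep(layers_dims, seed=1):
    """One (W, b) pair per layer; W_l has shape (layers_dims[l], layers_dims[l-1])."""
    rng = np.random.default_rng(seed)
    parameters = {}
    for l in range(1, len(layers_dims)):
        fan_in = layers_dims[l - 1]
        # Assumed scaling by 1/sqrt(fan_in) keeps early activations in a reasonable range
        parameters["W" + str(l)] = rng.standard_normal((layers_dims[l], fan_in)) / np.sqrt(fan_in)
        parameters["b" + str(l)] = np.zeros((layers_dims[l], 1))
    return parameters

params = initialize_parameters_deep([12288, 20, 7, 5, 1])
for name, value in params.items():
    print(name, value.shape)
```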

```python
def L_layer_model(X, Y, layers_dims, learning_rate = 0.0075, num_iterations = 3000, print_cost=False):
    ...
```
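As with `two_layer_model`, only the signature of `L_layer_model` is preserved. Its forward pass can be sketched as below. The sketch assumes the [LINEAR -> RELU] × (L-1) -> LINEAR -> SIGMOID structure and a `parameters` dictionary with keys `W1`, `b1`, ..., `WL`, `bL`. The function name `L_model_forward_sketch` is hypothetical.

```python
import numpy as np

def L_model_forward_sketch(X, parameters):
    """[LINEAR -> RELU] * (L-1) -> LINEAR -> SIGMOID; returns (1, m) probabilities."""
    L = len(parameters) // 2                 # one W and one b per layer
    A = X
    for l in range(1, L):
        Z = parameters["W" + str(l)] @ A + parameters["b" + str(l)]
        A = np.maximum(0, Z)                 # ReLU in every hidden layer
    ZL = parameters["W" + str(L)] @ A + parameters["b" + str(L)]
    return 1.0 / (1.0 + np.exp(-ZL))         # sigmoid in the output layer

# Tiny example with layer sizes [4, 3, 2, 1] and random weights
rng = np.random.default_rng(0)
dims = [4, 3, 2, 1]
parameters = {}
for l in range(1, len(dims)):
    parameters["W" + str(l)] = rng.standard_normal((dims[l], dims[l - 1]))
    parameters["b" + str(l)] = np.zeros((dims[l], 1))
AL = L_model_forward_sketch(rng.standard_normal((4, 5)), parameters)
print(AL.shape)  # (1, 5)
```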

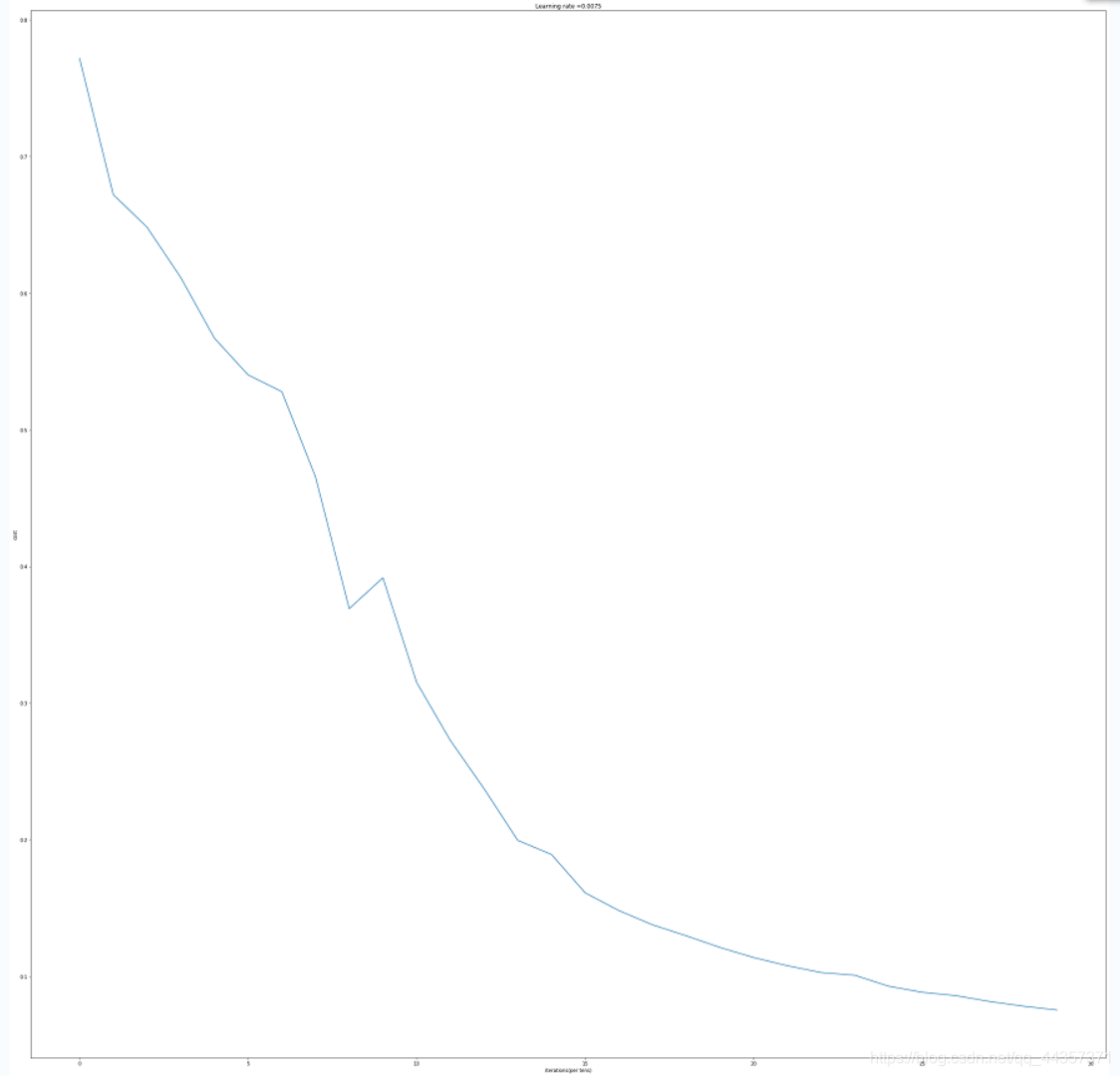

```python
parameters = L_layer_model(train_x, train_y, layers_dims, learning_rate=0.0075, num_iterations=3000, print_cost=True)
```

```
Cost after iteration 0: 0.771749
Cost after iteration 100: 0.672053
Cost after iteration 200: 0.648263
Cost after iteration 300: 0.611507
Cost after iteration 400: 0.567047
Cost after iteration 500: 0.540138
Cost after iteration 600: 0.527930
Cost after iteration 700: 0.465477
Cost after iteration 800: 0.369126
Cost after iteration 900: 0.391747
Cost after iteration 1000: 0.315187
Cost after iteration 1100: 0.272700
Cost after iteration 1200: 0.237419
Cost after iteration 1300: 0.199601
Cost after iteration 1400: 0.189263
Cost after iteration 1500: 0.161189
Cost after iteration 1600: 0.148214
Cost after iteration 1700: 0.137775
Cost after iteration 1800: 0.129740
Cost after iteration 1900: 0.121225
Cost after iteration 2000: 0.113821
Cost after iteration 2100: 0.107839
Cost after iteration 2200: 0.102855
Cost after iteration 2300: 0.100897
Cost after iteration 2400: 0.092878
Cost after iteration 2500: 0.088413
Cost after iteration 2600: 0.085951
Cost after iteration 2700: 0.081681
Cost after iteration 2800: 0.078247
Cost after iteration 2900: 0.075444
```

```python
predict_train = predict(train_x, train_y, parameters)
```

```
Accuracy: 0.9904306220095691
```

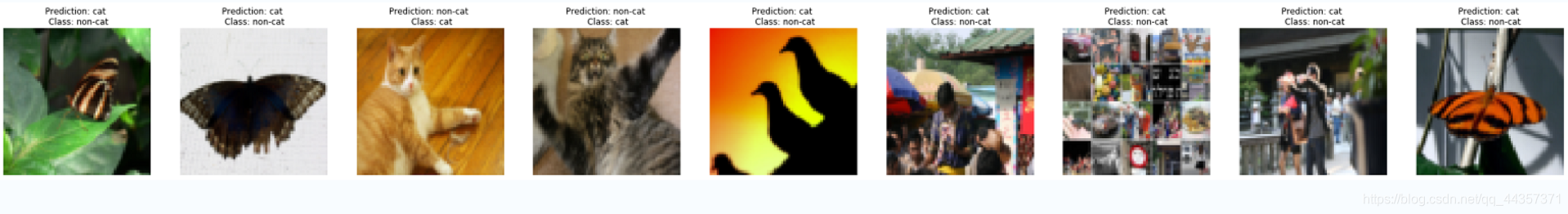

```
Accuracy: 0.8200000000000001
```

```python
print_mislabeled_images(classes, test_x, test_y, predict_test)
```

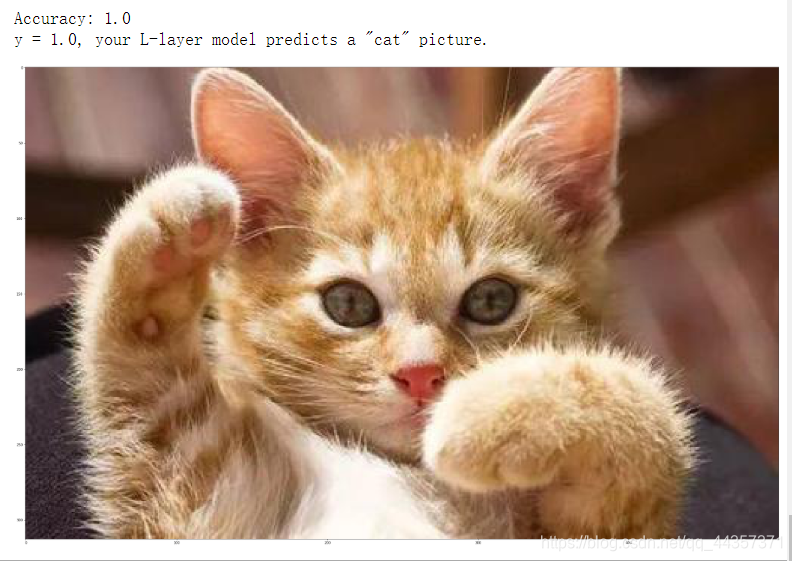

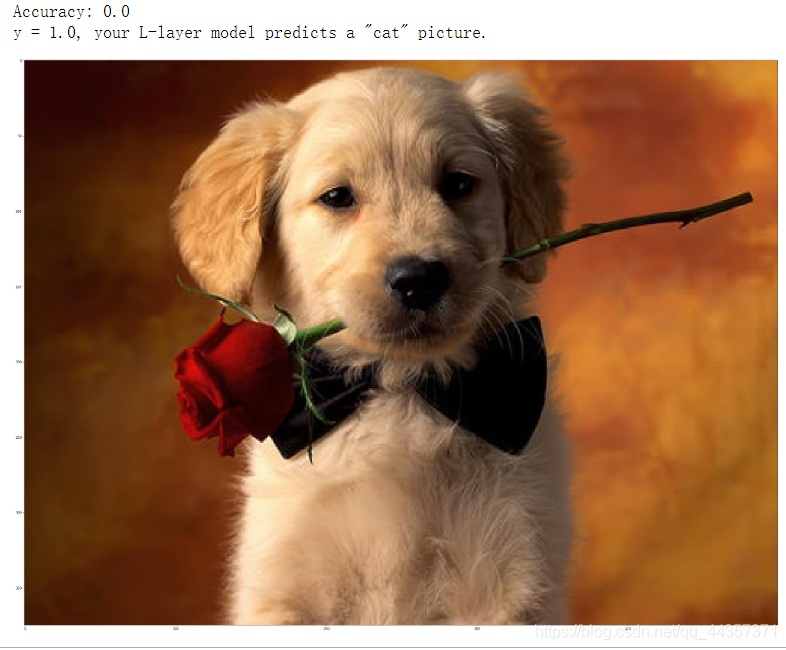

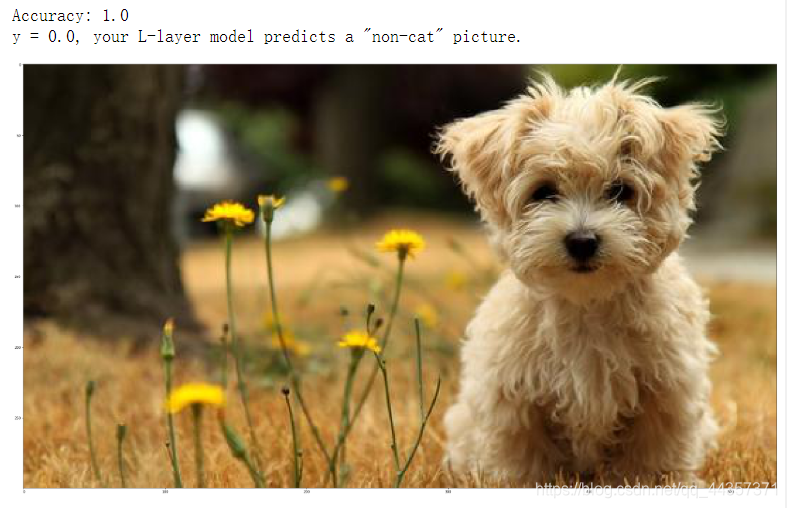

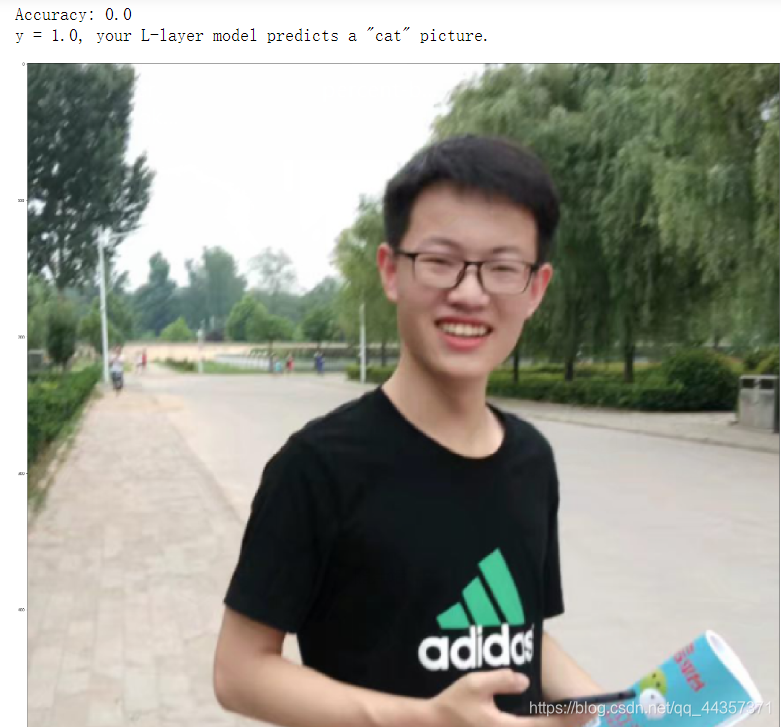
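`print_mislabeled_images` is presumably another helper from the assignment code. Its core step is finding which examples were misclassified. The sketch below assumes `p` and `y` are (1, m) arrays of 0s and 1s. Their sum equals 1 exactly where prediction and label disagree. The function name is hypothetical, and the plotting part is omitted.

```python
import numpy as np

def mislabeled_indices(p, y):
    """Column indices where the 0/1 prediction p differs from the label y."""
    # p + y is 0 or 2 when they agree and 1 when they disagree
    return np.where((p + y) == 1)[1]

p = np.array([[1, 0, 1, 1, 0]])
y = np.array([[1, 1, 0, 1, 0]])
print(mislabeled_indices(p, y))  # [1 2]
```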

Test with your own image

```python
my_image = "cat1.jpg"
```
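A photo such as `cat1.jpg` must match the training input format before the trained model can use it. That means num_px × num_px × 3 pixels, flattened into one column and scaled like the training data. The sketch below makes three assumptions. The image has already been loaded as an (H, W, 3) uint8 NumPy array, for example with Pillow's `Image.open(...).convert("RGB")` followed by `np.asarray`. `num_px` is 64. The training inputs were divided by 255. The sketch uses simple nearest-neighbour resizing to avoid extra dependencies. This is a stand-in and not necessarily the resizing the author used, and `preprocess_image` is a hypothetical name.

```python
import numpy as np

def preprocess_image(img, num_px=64):
    """(H, W, 3) uint8 image -> (num_px*num_px*3, 1) column with values in [0, 1]."""
    h, w = img.shape[:2]
    # Nearest-neighbour resize: pick one source row/column per output row/column
    rows = np.arange(num_px) * h // num_px
    cols = np.arange(num_px) * w // num_px
    small = img[rows[:, None], cols[None, :], :3]    # shape (num_px, num_px, 3)
    # Same (row, column, channel) order as reshape on the training images
    return small.reshape(num_px * num_px * 3, 1) / 255.0

# Synthetic 480x640 pure-red image standing in for a loaded photo
photo = np.zeros((480, 640, 3), dtype=np.uint8)
photo[..., 0] = 255
x = preprocess_image(photo)
print(x.shape)  # (12288, 1)
```

The resulting column has the same layout as one column of `train_x`. It can therefore go through the trained model, with an output above 0.5 read as class 1.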

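When reposting, please credit the source. You are welcome to check the sources cited in this article and to point out any errors or unclear wording. Leave a comment below or email 2470290795@qq.com.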

Title: Deep Neural Network Programming Assignment 2


Author: runze

Published: 2020-02-16, 17:11:47

Last updated: 2020-02-23, 08:29:54

Original link: http://yoursite.com/2020/02/16/%E5%90%B4%E6%81%A9%E8%BE%BE%20%E6%B7%B1%E5%BA%A6%E5%AD%A6%E4%B9%A0/01%E7%A5%9E%E7%BB%8F%E7%BD%91%E7%BB%9C%E5%92%8C%E6%B7%B1%E5%BA%A6%E5%AD%A6%E4%B9%A0/%E6%B7%B1%E5%B1%82%E7%A5%9E%E7%BB%8F%E7%BD%91%E7%BB%9C%E7%BC%96%E7%A8%8B%E4%BD%9C%E4%B8%9A2/

License: "Attribution-NonCommercial-ShareAlike 4.0". When reposting, please keep the link to the original article and credit the author.